On September 11, early in the morning, my friend called me in a panic: “BlackMamba attacked more than 10K students in our University.” I was shocked and confused. “That’s not possible. Do we get these in Europe?” He clarified, “I am talking about the AI-based malware that we were discussing the other day. Can you help?”

I was a bit perplexed but could understand the gravity of the situation. By the way, this conversation is simulated; I am using it to set the context for the threats that we will face in the near future.

Incidentally, malware like “BlackMamba” can cause significant damage to businesses, including financial losses and reputational harm. It infiltrates targeted systems via phishing campaigns or software vulnerabilities. Because it can dynamically alter its own code each time it executes, it bypasses endpoint detection software and remains hidden. Once installed, the malware steals sensitive information, leading to data theft or even impairing the IT infrastructure.

The recent cyberattack on the University of Duisburg-Essen that shut down the entire IT Infrastructure, including the internet, was one such attack. Another incident was the ransomware attack on Munster Technological University. These are just a few incidents that rocked the cybersecurity space in Education technology.

Let’s roll back for a moment. Until now, industries have adopted technology at different paces; this time, however, the situation was different. Emerging technologies like AI and cloud computing were adopted by industries almost instantly. They were eager to compete from the very beginning. While technology makes our lives easier, easy access to shared information also raises many legal issues for businesses.

The flip side of this fairy tale is that “Fast-paced digitalization makes businesses vulnerable to cyber-attacks.”

The internet has connected our world, and cybercriminals are exploiting the information in that connected world for fraudulent purposes. In addition, AI makes hackers more efficient: it helps them automate these crimes, lowers the entry barrier, and scales attacks beyond the capacity of our current cyber defense systems. To counter this challenge and protect themselves, many businesses are developing advanced security systems that can identify and prevent threats in real time.

It’s time we evolve our security systems from passive observers to active responders.

So, what about Industries thriving in the L&D and Assessment Domain?

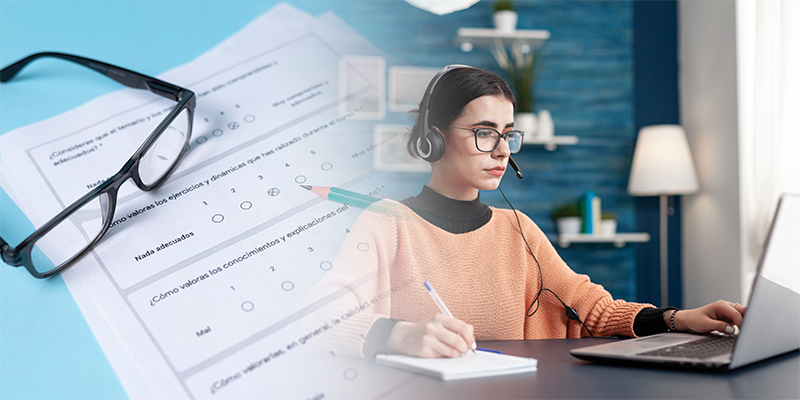

This time, we folks from L&D and Performance Assessment started early, and we found these newer technologies to be the game changers. The tech and operational nuances were discussed and strategized at all levels. Every stakeholder agreed that maintaining the security of online learning, online tests, online exams, and online assessments is vital for operations. Security plays a big role in ensuring accurate measurement, but it also protects the organization’s intellectual property and brand integrity.

Implementing new-age security systems ensures that the outcomes produced by Learning and Assessment systems are fair, reliable, and valid. It also gives assurance that any credential, certification, license, or qualification has been achieved honestly.

Stakes are really high, and L&D teams are seriously looking for partners who can help them with their queries. Let me share a gist of common queries from the industry.

Almost all of them wanted to know about new developments to ensure test security, for example, detecting any test misconduct. Around 80 percent of the people were worried about how to validate a test taker and ensure uniformity across remote and in-person testing. There were some queries on operational aspects like, “How to prevent a test taker from sharing test items or forms online?” and “What type of secure online remote tests, exams, and assessments are used in education?”

In addition to these, there were some strong queries on policy implementation, such as “Preparation and communication of a strong test security policy,” “Need of a standard guideline document on security measures on how to prevent misconduct on test day,” and “A list of secure testing measures that I can use during test design and development.”

But the question that is echoing everywhere is, “How can AI help students and teachers with assessment?”

In the end, Test and Assessment is all about “conduct” and “misconduct,” and the fear of misconduct is well founded. There are various forms of misconduct, such as getting an illegal copy of content before a test, copying answers from another test taker, or using someone else to take the test. There are many other examples.

Imagine a scenario where a hacker uses generative AI tools to make apps that search the internet and create fake profiles of the targets. They can make fake websites that trick people into giving up their credentials, or make many websites that differ only slightly from one another, increasing their chances of bypassing network security tools. Think about the impact of this on a University where digital learning and assessment are at risk. All the data and processes are vulnerable.

That’s scary! You need to stay safe out there!

Technology providers should address these challenges by preventing and outpacing fraudulent practices, and should ensure the validity of the services they administer by addressing security risks and threats.
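To make the lookalike-website scenario a little more concrete, here is a minimal sketch of one defensive check: flagging domains that closely resemble, but do not exactly match, a domain the institution owns. The domain name, the threshold, and the function names are all hypothetical, and a real deployment would combine a check like this with many other signals.

```python
from difflib import SequenceMatcher

# Hypothetical list of domains the institution actually owns (an assumption).
TRUSTED_DOMAINS = ["exams.example-university.edu"]


def similarity(a: str, b: str) -> float:
    """Return a 0..1 similarity ratio between two domain names."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def is_lookalike(domain: str, threshold: float = 0.85) -> bool:
    """Flag a domain that closely resembles, but does not equal, a trusted one.

    The 0.85 threshold is an illustrative assumption, not a tuned value.
    """
    candidate = domain.lower()
    if candidate in TRUSTED_DOMAINS:
        return False  # exact match: this is the genuine site
    return any(similarity(candidate, t) >= threshold for t in TRUSTED_DOMAINS)


if __name__ == "__main__":
    # "examp1e" uses the digit 1 in place of the letter l.
    for d in ["exams.example-university.edu",
              "exams.examp1e-university.edu",
              "google.com"]:
        print(d, is_lookalike(d))
```

A simple string-similarity ratio will miss some tricks (for example, visually similar Unicode characters), so treat this as a starting point rather than a complete filter.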

So the question is, “How are we exploring Security Measures in E-Assessment?”

My Journey with Security

It’s been more than 25 years since I was first exposed to security. That experience might look outdated, but revisiting it in the present-day context might help connect the dots.

My first exposure to the term security dates back to 1996, when I was advised, “Never permit the undeserving to acquire unexpected advantage. This will reduce vulnerability or hostile acts and enhance freedom of action for the deserving ones.” Getting exposure to the Reveal-Secret-Security philosophy was an eye-opener, and it helped me immensely to carry out the operations that looked vulnerable.

Almost ten years later, sometime around 2006, I had a chance to share these inputs with my Principal architect, who was designing a performance assessment tool for a government project. The outcome of our discussion was to build a robust solution that:

- Doesn’t Reveal: Plug Exposure, Leak, and Giveaway

- Keeps Secrets: Assign seals for Classified, Restricted, Confidential

- Maintains Security: Enforce and Confirm Certainty, Safety, Reliability, Dependability
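The “Keeps Secrets” idea of assigning seals can be sketched in a few lines. This is only an illustration, not the tool we built: the numeric ordering of the seals, the `AssessmentItem` type, and the `can_view` rule are assumptions made for the example.

```python
from dataclasses import dataclass
from enum import IntEnum


class Seal(IntEnum):
    """Seal levels from the list above; the ordering is an assumption."""
    CONFIDENTIAL = 1
    RESTRICTED = 2
    CLASSIFIED = 3


@dataclass(frozen=True)
class AssessmentItem:
    """A hypothetical question-bank item carrying a seal."""
    item_id: str
    seal: Seal


def can_view(clearance: Seal, item: AssessmentItem) -> bool:
    """Allow access only when the viewer's clearance is at least the item's seal."""
    return clearance >= item.seal


if __name__ == "__main__":
    item = AssessmentItem("Q-001", Seal.RESTRICTED)
    print(can_view(Seal.CLASSIFIED, item))    # clearance above the seal
    print(can_view(Seal.CONFIDENTIAL, item))  # clearance below the seal
```

Checking the seal at every read, rather than once at login, is what keeps an item from being revealed to the undeserving.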

In a week’s time, we were ready with our wireframes for a client demo. The presentation went as planned, and the prospect looked impressed. He said, “Friends, the stakes are really high, and we are not in a business of trust. We are looking for transparency. You guys do attract my attention, but here are my two cents. I want you guys to consider assessments as an open practice that endorses legitimate inference. Assessments must promulgate an Information Assurance model and employ multiple measures of performance. Lastly, assessments should measure what is worth learning, not just what is easy to measure.” He then asked how long we would need to build working versions of these ideas, which his team could evaluate against their requirements. We asked for a week to prepare our response.

For us, the challenge was to analyze these pointers and break them into consumable pieces. By the end of day one, we came up with a list of parameters that map to the requirement. The rest were sleepless nights, but we were able to define and build the components that addressed the security challenges residing within the client’s requirements.

Let me detail it for you. This might be long, but you will find it interesting.

To implement the first one, “Assessment must be an open practice,” we need to address Physical Security, Human Security, Application Security, Code and Container Security, Assessment and Test Security, Critical Infrastructure Security for Network, Database, and any Third-party Device.

For the next one, “Assessment must endorse legitimate inference,” we need to work on Data Security and Data Access, Data minimization, Data Handling, Data Protection, Data Classification, Data Discovery, and Data Governance.

To implement the third one, “Assessment must promulgate an Information Assurance model,” we need to define and implement Informed consent, Assurance of Availability, Protection of Confidentiality, Protection of Test Integrity, Protection of Authenticity, Non-repudiation of User Data, and Transparency Assurance.
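As a small illustration of “Protection of Test Integrity,” the sketch below attaches a keyed hash (HMAC) to a submitted response so that later tampering can be detected. The field names and the key are hypothetical. Note the limits of the technique: a shared-key HMAC covers integrity and authenticity between parties that hold the key, while true non-repudiation would need asymmetric digital signatures, which are not shown here.

```python
import hashlib
import hmac
import json


def sign_submission(submission: dict, key: bytes) -> str:
    """Return a hex HMAC-SHA256 tag over a canonical JSON form of the submission."""
    payload = json.dumps(submission, sort_keys=True, separators=(",", ":")).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_submission(submission: dict, tag: str, key: bytes) -> bool:
    """Recompute the tag and compare in constant time."""
    expected = sign_submission(submission, key)
    return hmac.compare_digest(expected, tag)


if __name__ == "__main__":
    key = b"demo-key-for-illustration-only"  # hypothetical; never hard-code real keys
    submission = {"candidate": "C-42", "item": "Q-001", "answer": "A"}
    tag = sign_submission(submission, key)

    print(verify_submission(submission, tag, key))      # untouched submission
    tampered = dict(submission, answer="B")
    print(verify_submission(tampered, tag, key))        # answer changed after signing
```

Serializing with sorted keys means the same submission always produces the same tag, regardless of the order in which its fields were assembled.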

The fourth one, “Assessment should employ multiple measures of performance,” had a lot of subjective components such as assessment validity with respect to learning objective, Authenticity of learner’s performance and work relevance, Sufficiency to judge the coverage of learning outcome, Reliability to track performance over a time-span, and life cycle of Terminal and Enabling Objectives. All of these are crucial to help businesses define measurement indicators, evaluation metrics, decision sequences, and classification of Responses.

The last one, “Assessment should measure what is worth learning, not just what is easy to measure,” has a lot of dependency on the business objectives. It covers Benchmark Learning with Performance, Benchmark Learning Content Mapped to Cognitive Load, Benchmark Learning Actions Mapped to Performance Skills, Map Test Item with Objective Domain, Map Test Bank to Performance Skill, Benchmark User Experience of Publishing Platform, Validation of the Decision tree with Respect to the Objective Domain, and Restrict Bias for Cognitive and Performance Skills.

These pointers are still relevant for various assessment interventions. It is essential to adopt principles like these to make sure that emerging technologies achieve their economic potential and don’t go rogue, undermining accountability, affecting the vulnerable, and reinforcing unethical biases. I use these principles to design my core security strategy. I also use emerging technologies along with my core strategy to create learning and assessment interventions that are strong and performance-ready.

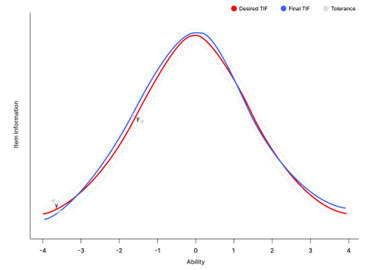

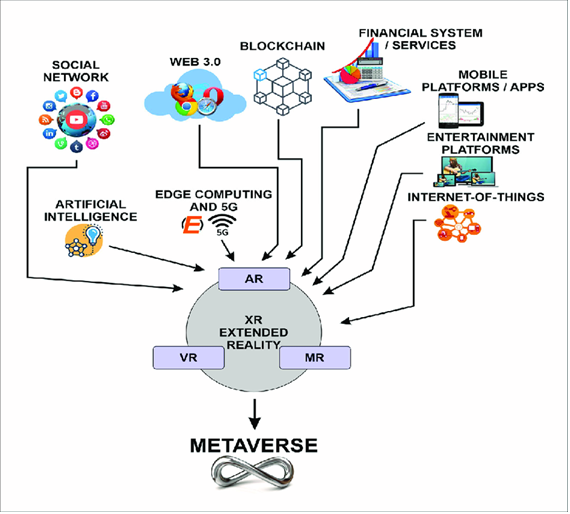

Some key technologies have gained a lot of popularity in the last two years, for example:

- Generative AI to eliminate algorithmic and human bias

- AI-powered augmented proctoring for online exam supervision

- Generative AI models to create new assessment formats

- AI-driven machine learning algorithms to produce complex essay responses

- AI-powered adaptive secured testing methods to improve the fairness of assessments

- AI-driven algorithms that power personalized feedback systems, which detect learning gaps and offer targeted interventions

There are many more.

In addition, collaborative research on using Geo-fencing and Blockchain technology will revolutionize the assessment business. The power to make secured Question Bank Vaults with Geo-fencing, Time Stamps, Bio maps, Captcha loggers, Wearable bands, Proximity scans, and Sentiment analyses is a reality and will be realized by 2025.
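To show how two of these controls might combine, here is a minimal sketch of a vault check that opens a question bank only when the request comes from inside a geo-fence and within a scheduled time window. The coordinates, radius, and function names are hypothetical, and the device location is assumed to be trustworthy, which a real system would have to verify separately.

```python
import math
from datetime import datetime, timezone

EARTH_RADIUS_KM = 6371.0  # mean Earth radius


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance in kilometres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(a))


def may_open_vault(lat: float, lon: float, now: datetime, *,
                   center: tuple, radius_km: float,
                   opens: datetime, closes: datetime) -> bool:
    """Allow access only inside the geo-fence AND inside the time window."""
    inside_fence = haversine_km(lat, lon, center[0], center[1]) <= radius_km
    inside_window = opens <= now <= closes
    return inside_fence and inside_window


if __name__ == "__main__":
    center = (50.0, 8.0)  # hypothetical test-centre coordinates
    opens = datetime(2025, 1, 10, 9, 0, tzinfo=timezone.utc)
    closes = datetime(2025, 1, 10, 12, 0, tzinfo=timezone.utc)
    now = datetime(2025, 1, 10, 10, 0, tzinfo=timezone.utc)

    print(may_open_vault(50.0, 8.0, now, center=center, radius_km=1.0,
                         opens=opens, closes=closes))
```

Requiring both conditions at once means a leaked login is of little use from another location or outside the exam slot.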

My mentor once said, “The baseline for assessment is accuracy, and the accuracy of assessment results depends on assessment security.”

For more information on our pursuit, reach out to us at connect@excelsoftcorp.com

Explore this and many such interesting articles at the eLearning Industry
